- MIT study finds fine-tuned AI models can generate high-precision commands for space missions.

- The researchers demonstrated that models like Llama-2 can generate accurate thrust commands across various control tasks, including orbital transfer and lunar landing.

- The models required less training data than traditional neural networks and generalized better to unseen scenarios, though with trade-offs in precision for some tasks.

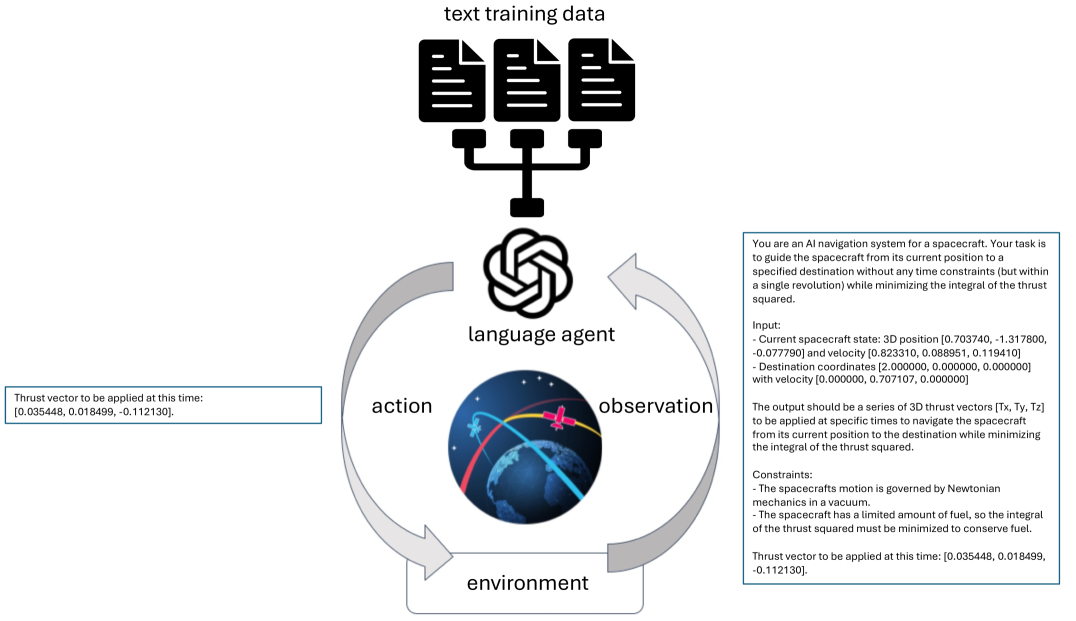

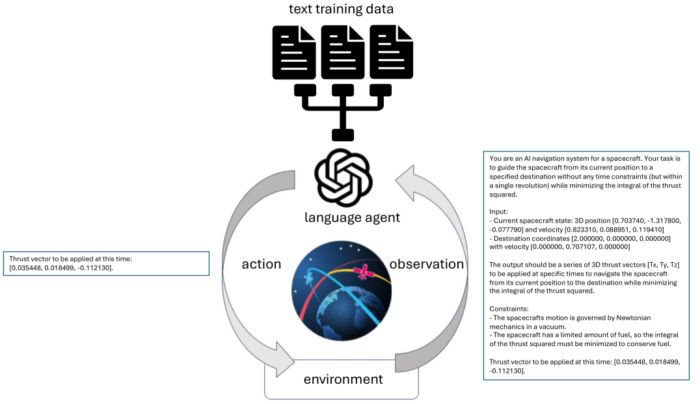

- Image: Illustration of the training and control process used in this work.

Large language models, best known for writing essays and answering chat prompts, can also steer spacecraft. That’s the conclusion of a new study from MIT and collaborators, which shows that fine-tuned foundation models can control simulated spacecraft with high precision and minimal training data.

The study, recently posted on the pre-print server arXiv, demonstrates that models like Meta’s Llama-2 can generate multi-dimensional thrust vectors, accurate to as many as 10 significant digits, to guide spacecraft in complex scenarios such as orbital transfers, lunar landings and cislunar navigation. Researchers tested models with 7 billion to 13 billion parameters and found that, after fine-tuning, these systems performed comparably to traditional control algorithms and in some cases outperformed them in robustness.

Led by Enrico Zucchelli and colleagues at MIT’s Department of Aeronautics and Astronautics, the work suggests that AI models trained primarily on language could eventually serve as general-purpose spacecraft controllers, capable of adapting to a variety of in-flight tasks with minimal reconfiguration.

Versatile AI for Space Guidance

The researchers focused on four benchmark problems: a toy 3D spring system, low-thrust orbital transfer, powered descent for landing and trajectory control in the Earth-Moon system using a three-body dynamic model. In each case, a language model was fine-tuned using a small dataset of state-action pairs — position, velocity, and thrust vectors — to predict control actions from text-based prompts that simulate spacecraft telemetry.

For example, a model might be prompted with a spacecraft’s current position and velocity, and asked to return the next thrust vector needed to reach a target orbit using minimal fuel. The models, initially trained on internet text, were fine-tuned using supervised learning to generate outputs as precise numerical commands.
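To make the idea of a text-based state-action pair concrete, here is a minimal sketch of how one training record could be assembled. The prompt wording, the field names `prompt` and `completion`, and the helpers `format_vector` and `make_example` are all hypothetical; the article does not give the study's actual template.

```python
import json

def format_vector(values, digits=10):
    """Render a vector as text with a fixed number of significant digits."""
    return "[" + ", ".join(f"{v:.{digits - 1}e}" for v in values) + "]"

def make_example(position, velocity, thrust, digits=10):
    """Build one supervised fine-tuning record from a state-action pair.

    The prompt layout below is an assumption for illustration only.
    """
    prompt = (
        "Spacecraft state:\n"
        f"position = {format_vector(position, digits)}\n"
        f"velocity = {format_vector(velocity, digits)}\n"
        "Return the next thrust vector."
    )
    completion = f"thrust = {format_vector(thrust, digits)}"
    return {"prompt": prompt, "completion": completion}

# Made-up numbers, chosen only to show the text layout.
example = make_example(
    position=[1.0, 0.0, 0.25],
    velocity=[0.0, 0.5, 0.0],
    thrust=[-0.125, 0.0, 0.0625],
)
print(json.dumps(example, indent=2))
```

Writing every number in scientific notation with a fixed digit count keeps the target strings uniform, which is one plausible way to ask a text model for high-precision numerical output.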

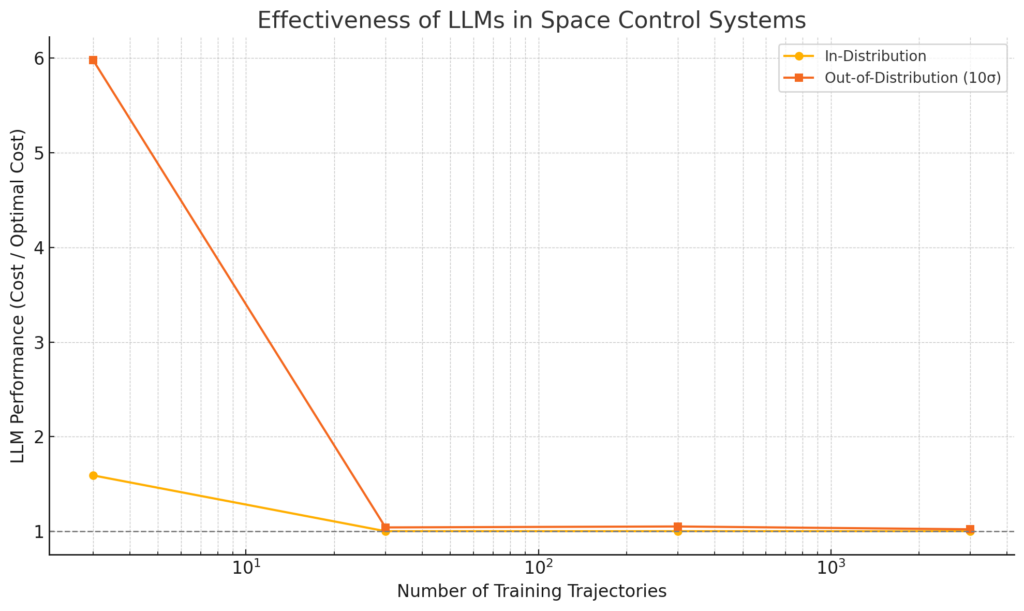

The results showed that LLMs could learn control behaviors using fewer examples than typical deep learning approaches. In one case, a Llama-2 model trained on data from just 30 sample trajectories could match the performance of traditional linear-quadratic regulators.

In another case involving orbital transfers, where a spacecraft must change altitude, speed, and inclination within a single orbit, the fine-tuned models achieved success rates of 99%, outperforming traditional optimization techniques that succeeded only 83% of the time under the same conditions.

Less Data, More Generalization

The researchers emphasize the data efficiency of fine-tuned language models. Compared to deep neural networks, which often require thousands of samples to generalize effectively, the LLMs used in this study could generalize to new scenarios with as little as one-tenth of the data.

This generalization held even when the models were tested with starting conditions outside the training distribution. For biased initial states, the LLM-controlled trajectories still completed the mission with higher reliability than numerical optimizers, which often failed to converge.

Another key finding is that the same language model can be fine-tuned for multiple control tasks without major performance loss. One LLM trained for both orbital transfer and landing achieved similar results to specialized models, suggesting future spacecraft could rely on a single onboard AI system for multiple phases of a mission.

Technical Strategy and Fine-Tuning

The models were fine-tuned using a method called low-rank adaptation (LoRA), which reduces the memory and computation burden by compressing the weight updates into smaller matrices. Training was performed on consumer-grade GPUs, showing that the method is accessible without supercomputing infrastructure.
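A rough sense of why low-rank adaptation is cheap comes from counting parameters. In LoRA, the update to a weight matrix of shape d_out by d_in is written as the product of two thin matrices, B (d_out by r) and A (r by d_in), and only those two are trained. The sketch below does that arithmetic; the layer size and the rank are illustrative assumptions, not values reported in the article.

```python
def lora_param_counts(d_out, d_in, rank):
    """Compare trainable parameters for a full update vs. a rank-r LoRA update.

    A full update trains d_out * d_in values. LoRA instead trains
    B (d_out x rank) and A (rank x d_in), so the update is B @ A.
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora

# Illustrative (assumed) layer size and rank.
full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
print(f"full update: {full:,} parameters")
print(f"LoRA update: {lora:,} parameters ({100 * lora / full:.2f}% of full)")
```

Under these assumed sizes, the low-rank update trains well under 1% of the values a full update of the same layer would need.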

Prompts were carefully designed to encode the spacecraft state and mission objectives as structured text. The language model then returned thrust vectors embedded within a text response. Because the model outputs are strings, researchers used consistent formatting and pattern-matching to extract the control values.
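The pattern-matching step can be sketched with a regular expression. The response format assumed here (`thrust = [a, b, c]`) and the function name `parse_thrust` are hypothetical, since the article does not show the study's actual output format.

```python
import re

# Matches signed decimals with an optional exponent, e.g. -1.25e-01 or 0.5
NUMBER = r"[-+]?(?:\d+\.?\d*|\.\d+)(?:[eE][-+]?\d+)?"
THRUST_PATTERN = re.compile(
    r"thrust\s*=\s*\[\s*(" + NUMBER + r"(?:\s*,\s*" + NUMBER + r")*)\s*\]"
)

def parse_thrust(response, expected_dim=3):
    """Extract the thrust vector from a model response, or return None.

    Returning None for a missing or wrong-sized vector lets the caller
    decide how to recover (retry, fall back to another controller, etc.).
    """
    match = THRUST_PATTERN.search(response)
    if match is None:
        return None
    values = [float(tok) for tok in match.group(1).split(",")]
    if len(values) != expected_dim:
        return None
    return values

print(parse_thrust("Sure. thrust = [-1.25e-01, 0.0, 6.25e-02]"))
print(parse_thrust("I cannot compute that."))
```

Rejecting malformed responses outright, rather than guessing at a partial vector, is a conservative design choice for a parser that feeds a control loop.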

In each scenario, performance was evaluated by comparing the AI-generated trajectories with those produced by classical methods, such as linear-quadratic regulators, indirect optimal control, or convex optimization solvers. While LLMs sometimes delivered less precise results, they showed better resilience in difficult or poorly conditioned problem instances.
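This kind of comparison can be pictured as running different controllers through the same simulator and scoring the resulting trajectories. The sketch below does this for a toy 3D spring system. Everything in it is an assumption made for illustration: the dynamics, the hand-tuned feedback gains (a stand-in for a classical regulator, not a computed LQR solution), and the rounded controller that imitates a less precise command source. It is not the study's evaluation code.

```python
import math

def rollout(controller, x0, v0, k=1.0, dt=0.01, steps=2000):
    """Simulate a unit mass on a 3D spring under a feedback controller.

    Uses semi-implicit Euler integration and returns the final distance
    from the origin, which is the target state in this toy problem.
    """
    x, v = list(x0), list(v0)
    for _ in range(steps):
        u = controller(x, v)
        for i in range(3):
            v[i] += dt * (-k * x[i] + u[i])
            x[i] += dt * v[i]
    return math.sqrt(sum(c * c for c in x))

def reference_controller(x, v, kp=2.0, kd=3.0):
    """Hand-tuned state feedback standing in for a classical regulator."""
    return [-kp * xi - kd * vi for xi, vi in zip(x, v)]

def coarse_controller(x, v, digits=2):
    """Same law with commands rounded to a few significant digits."""
    return [float(f"{u:.{digits - 1}e}") for u in reference_controller(x, v)]

x0, v0 = [1.0, -0.5, 0.25], [0.0, 0.0, 0.0]
err_ref = rollout(reference_controller, x0, v0)
err_coarse = rollout(coarse_controller, x0, v0)
print(f"reference terminal error: {err_ref:.3e}")
print(f"coarse    terminal error: {err_coarse:.3e}")
```

Because `rollout` accepts any callable that maps a state to a command, the same harness could score a learned controller and a classical one on identical initial conditions.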

Limitations and Trade-offs

Despite the promising results, the models are not perfect replacements for traditional control systems. In convex problems like powered descent, which are sensitive to precision and constraints, the LLMs showed larger errors and more variability than solvers that guarantee convergence.

The models also trade off accuracy for robustness. For example, while an LLM might produce a trajectory that reaches a target, it may violate secondary constraints like trajectory smoothness or fuel-optimality. That trade-off could be acceptable for early-stage guidance or contingency planning but may not meet the standards for final-stage maneuvers without additional validation.

The system also requires structured training data. Fine-tuning a model for real-world use would depend on access to high-fidelity simulation environments and accurate control solutions to use as ground truth.

Toward a General Spacecraft Controller

Still, the results point toward a new direction in spacecraft autonomy. The researchers argue that LLMs’ ability to generalize, learn from small datasets, and output structured language makes them ideal for flexible mission operations, especially in settings where reprogramming traditional controllers is infeasible.

The long-term vision is a single onboard AI that can be prompted with high-level commands in natural language — e.g., “land safely at the designated site using minimal fuel” — and autonomously determine the control strategy.

Future work will focus on extending the approach to more realistic physics, incorporating safety constraints, and integrating the models into full mission pipelines. The team also plans to explore multimodal models that incorporate visual and inertial inputs, potentially enabling AI pilots for uncrewed missions beyond Earth orbit.

The study was conducted by Enrico M. Zucchelli, Di Wu, Julia Briden, Christian Hofmann, Victor Rodriguez-Fernandez, and Richard Linares. The lead institution is the Massachusetts Institute of Technology, with additional contributions from Universidad Politécnica de Madrid.

For a more technical look at the study, with details that this summary story can’t provide, please read the study on arXiv here. Pre-print servers offer researchers a way to quickly disseminate studies for informal feedback. However, pre-prints are not officially peer-reviewed, and peer review is an important part of the scientific process.
